Sydney-based AI engineer with 15+ years of experience spanning enterprise data engineering, workflow automation, and production AI systems.

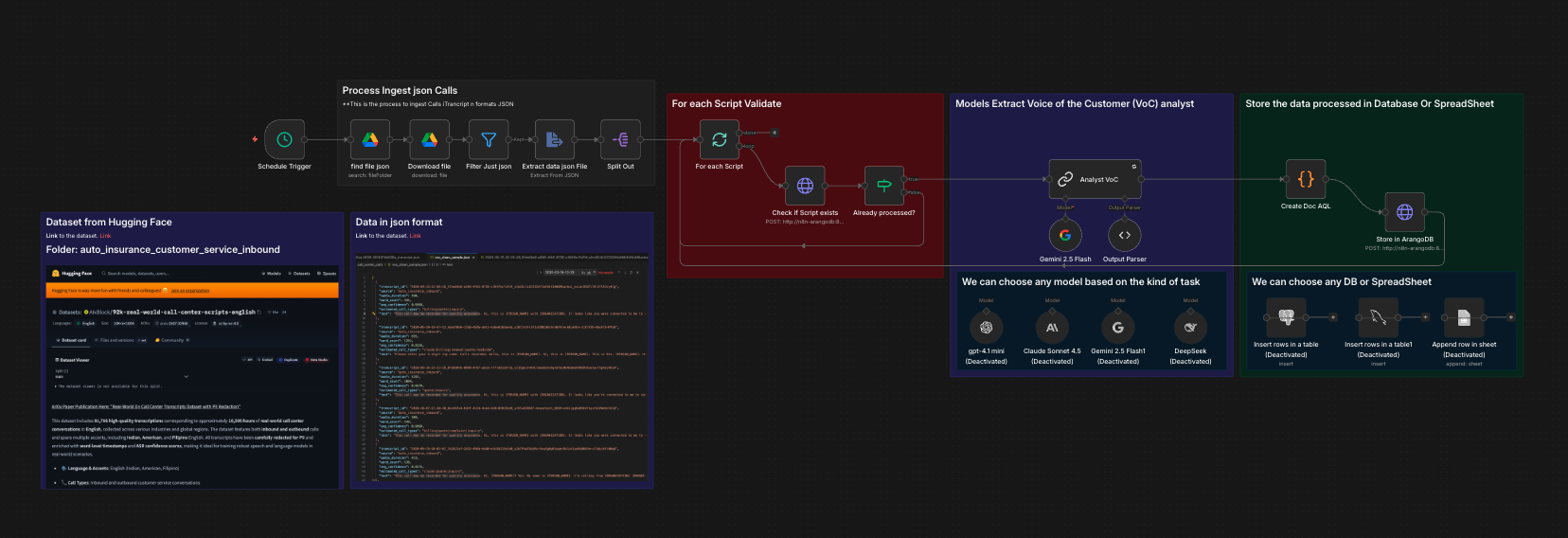

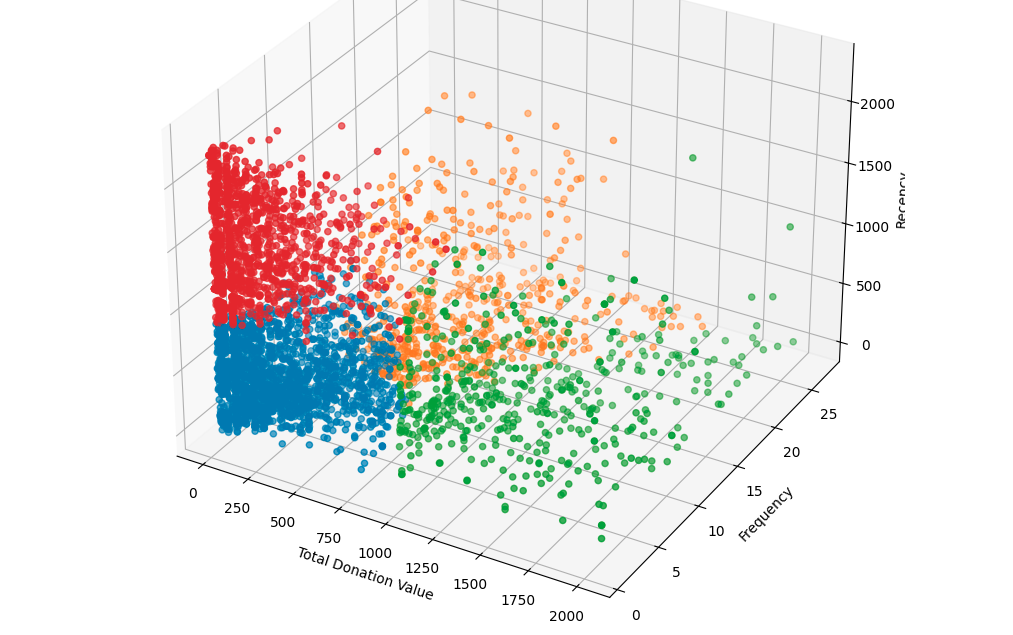

I build intelligent systems that turn unstructured data into business value. My current work focuses on designing and deploying LLM-powered pipelines, multi-agent architectures, and RAG (Retrieval-Augmented Generation) systems for Australian businesses — from recruitment and financial services to the not-for-profit sector.

What I'm Building Right Now

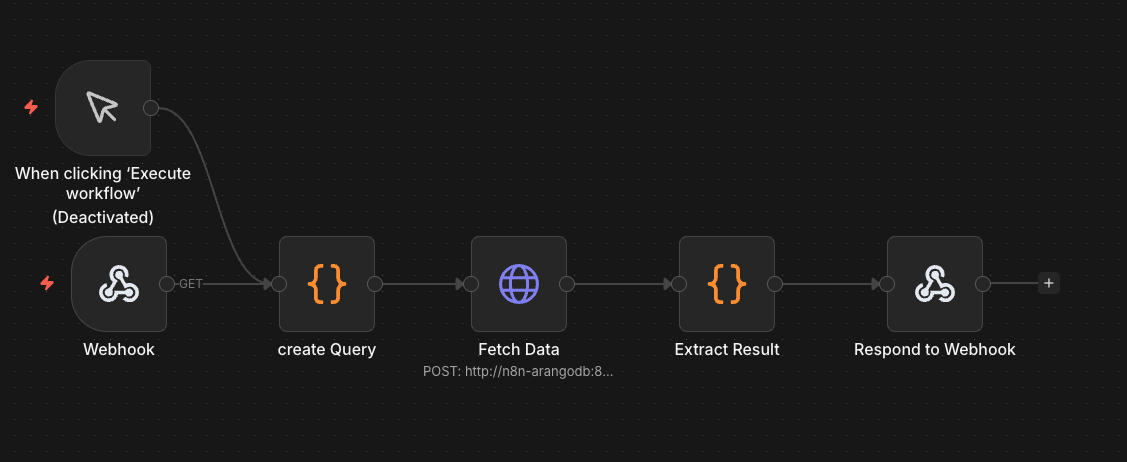

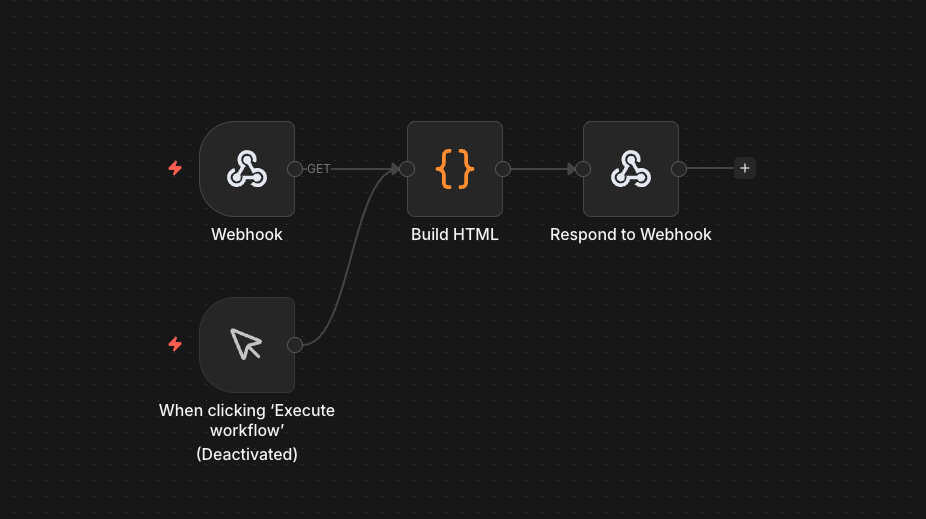

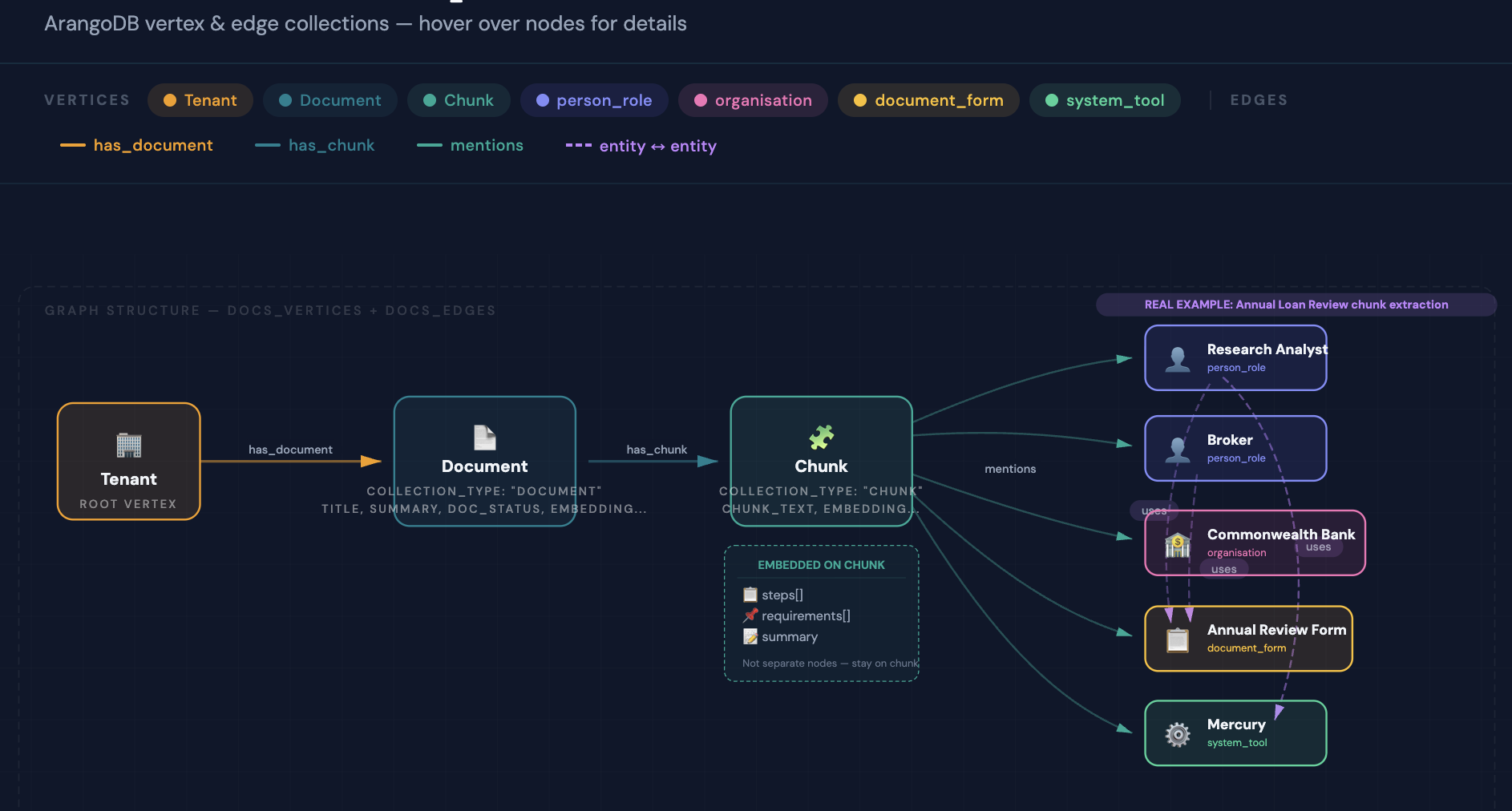

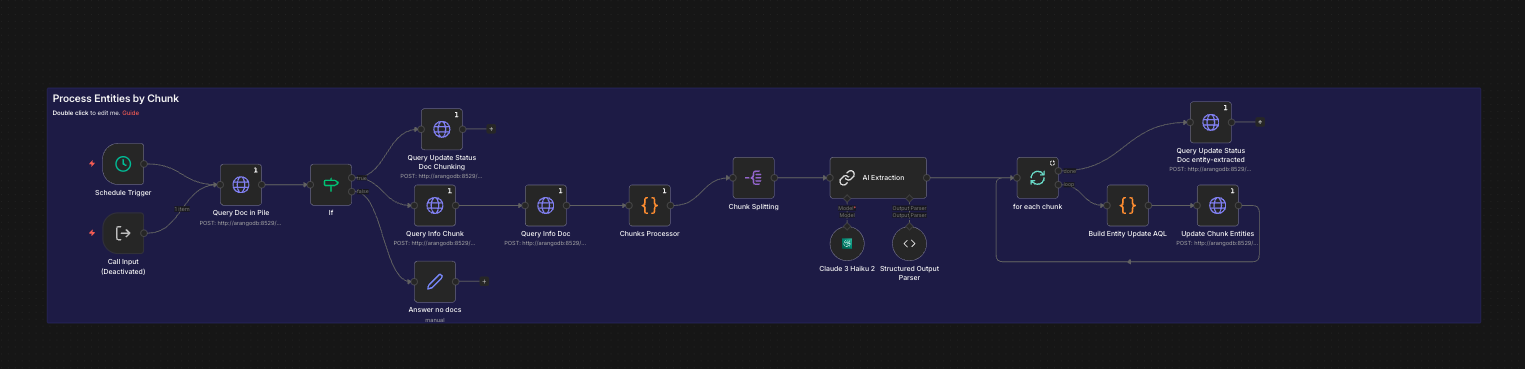

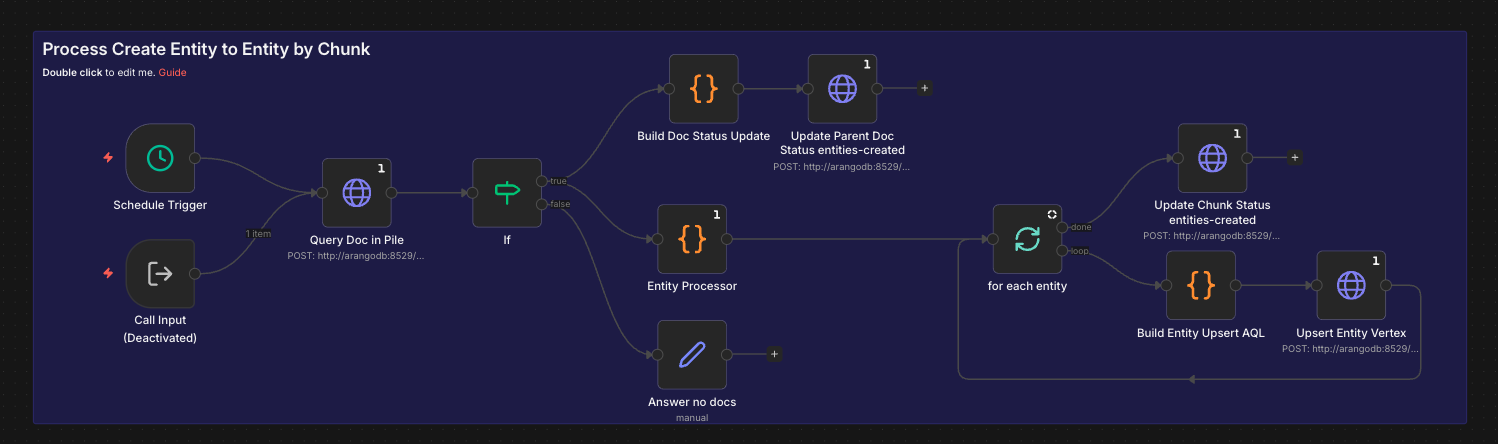

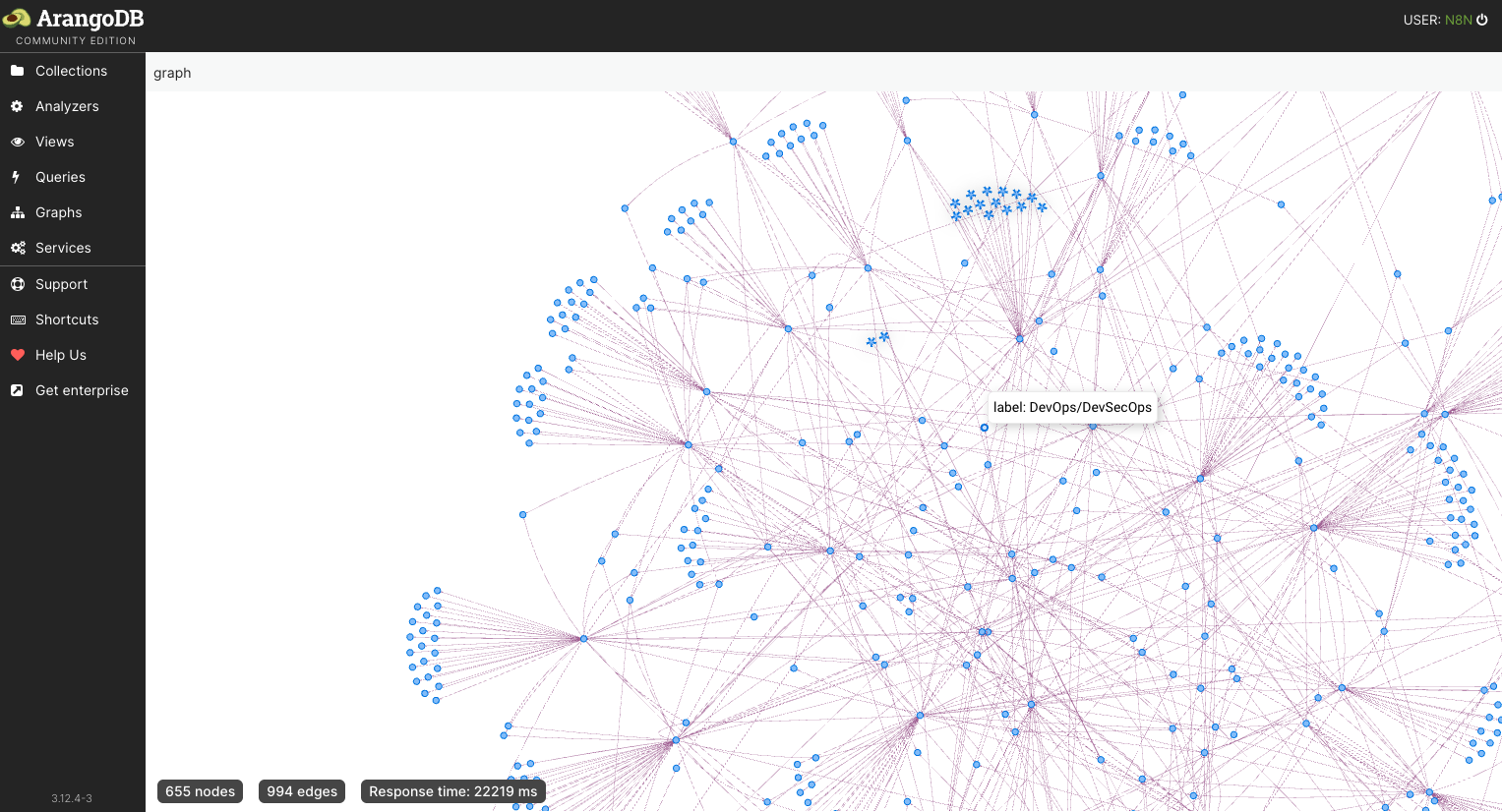

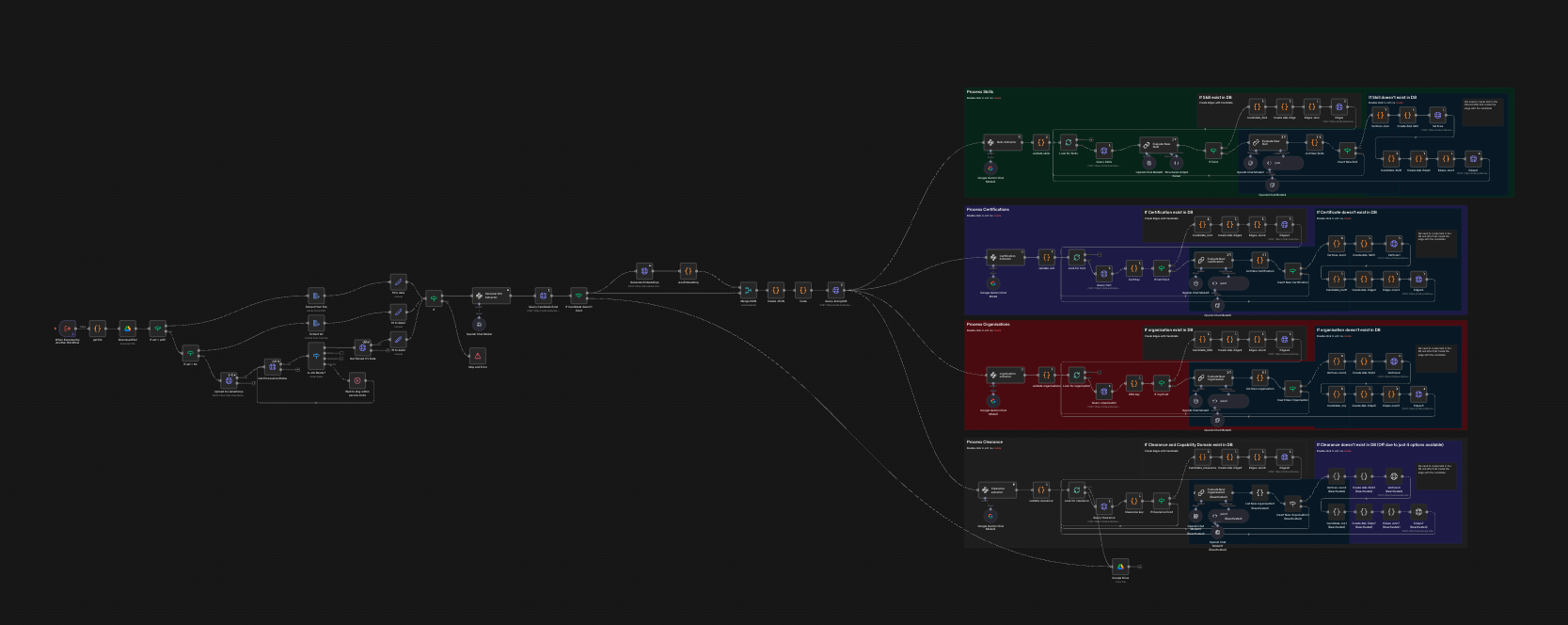

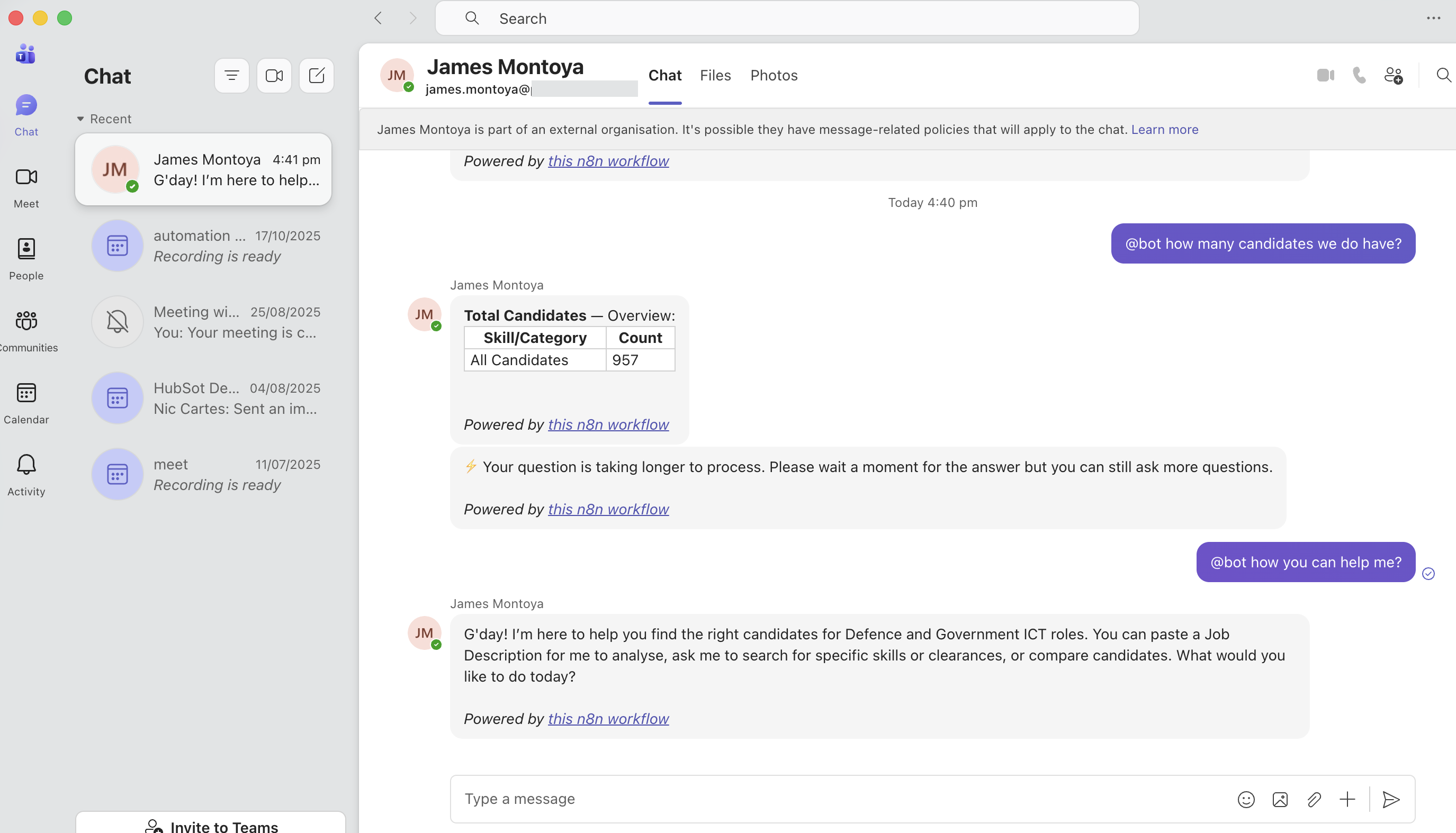

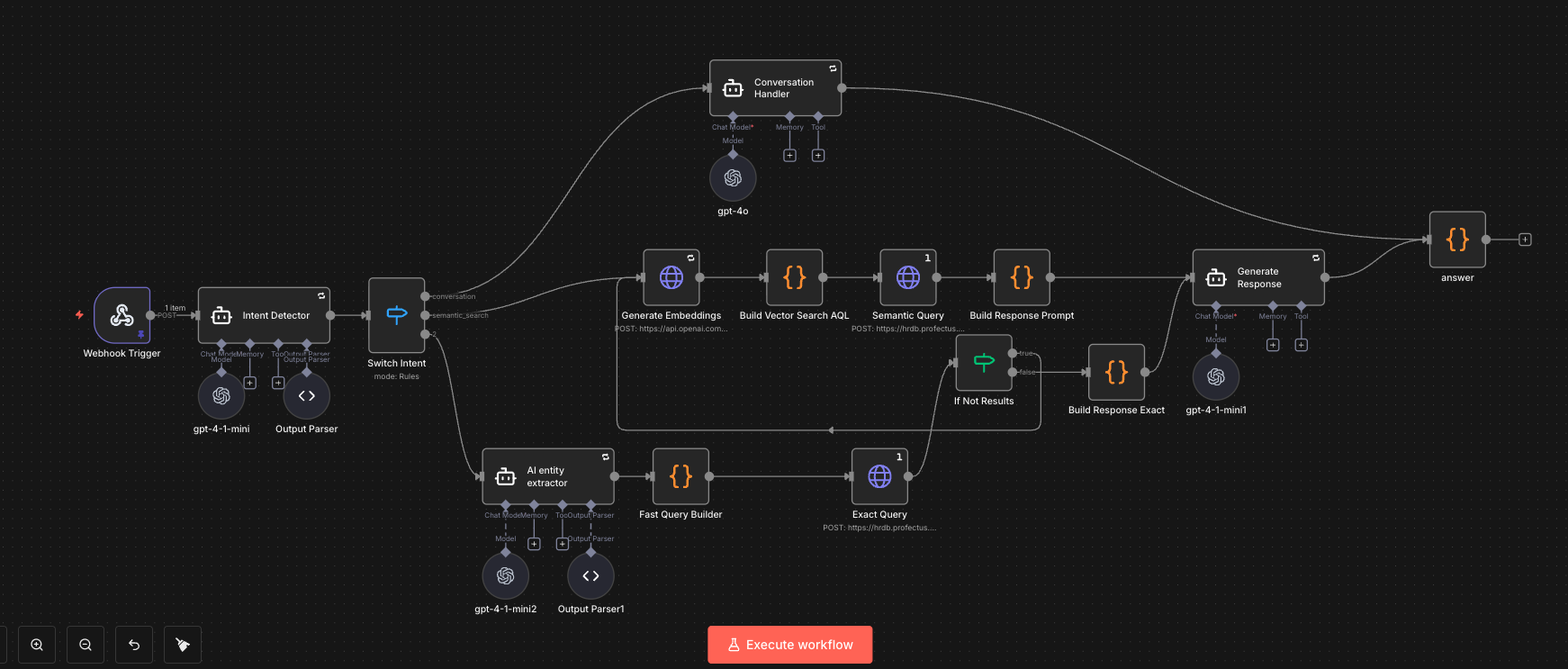

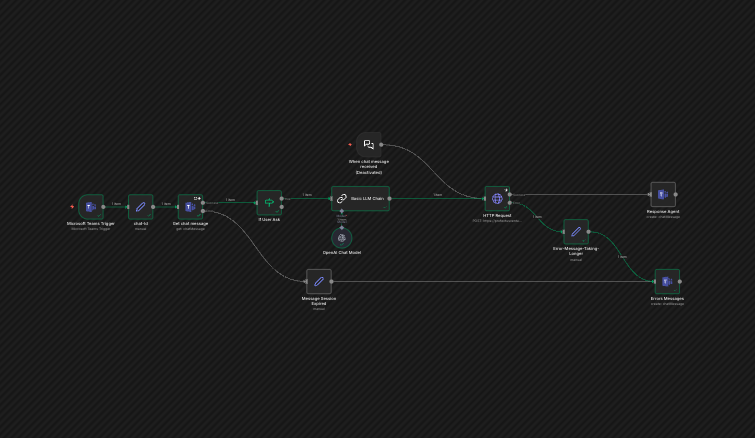

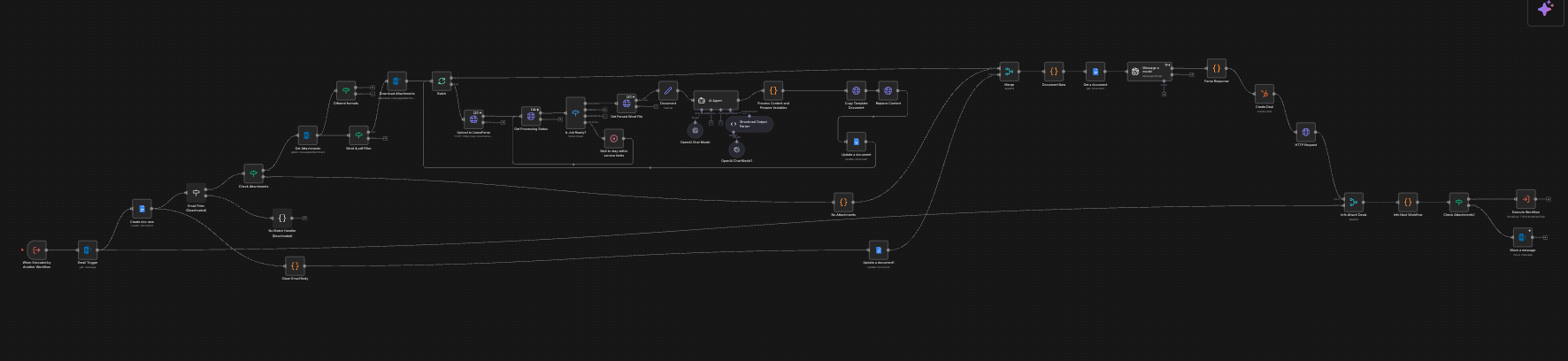

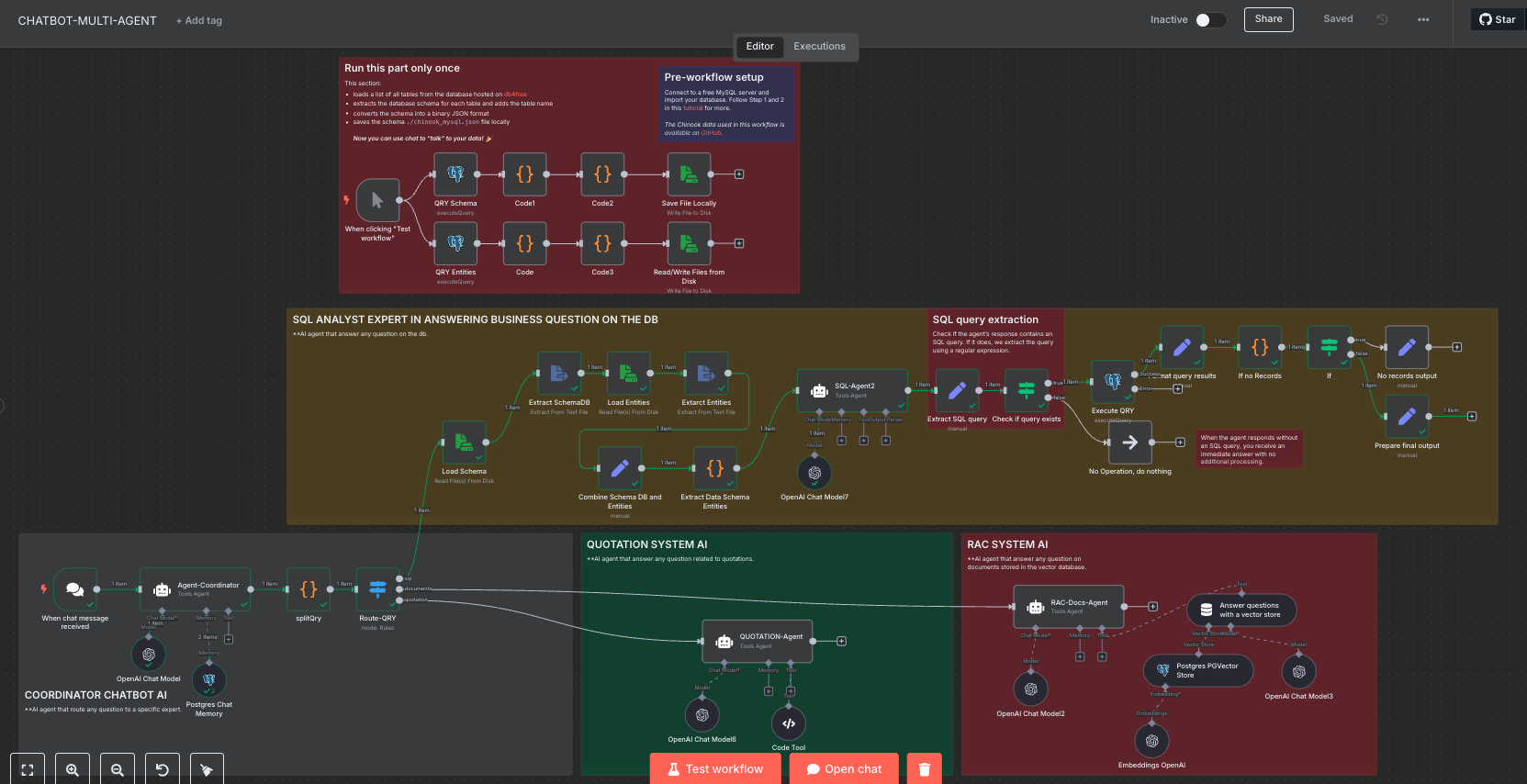

For a Defence & Government recruitment firm, I designed and built an end-to-end AI recruitment pipeline: CV ingestion, structured data extraction (skills, security clearances, capability domains), graph-based storage in ArangoDB, and a conversational AI agent accessible through Microsoft Teams. The system uses a multi-agent architecture — router, query builder, response formatter, and validator — orchestrated through n8n, enabling recruiters to find candidates through natural language queries instead of manual database searches.
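To make the agent chain more concrete, here is a deliberately simplified Python sketch of the same four roles. It is illustrative only, not the production system, which is orchestrated in n8n with LLM calls at each step. In this sketch every "agent" is a plain function, and the `candidates` collection, its `name`/`clearance` fields and helpers such as `route` and `build_query` are all hypothetical. I've also assumed the validator checks the generated AQL before it runs.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

# Australian security clearance levels the toy router recognises
CLEARANCES = ("baseline", "nv1", "nv2", "pv")
# AQL keywords that modify data; the validator rejects any query containing them
WRITE_KEYWORDS = re.compile(r"\b(INSERT|UPDATE|REPLACE|REMOVE|UPSERT)\b", re.IGNORECASE)


@dataclass
class Request:
    text: str
    intent: str = "unknown"
    aql: str = ""
    bind_vars: dict = field(default_factory=dict)


def _words(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def route(req: Request) -> Request:
    """Router (stub for an LLM call): classify the recruiter's question."""
    if any(w in CLEARANCES for w in _words(req.text)):
        req.intent = "search_by_clearance"
    return req


def build_query(req: Request) -> Request:
    """Query builder (stub): emit parameterised AQL for the detected intent."""
    if req.intent == "search_by_clearance":
        level = next(w for w in _words(req.text) if w in CLEARANCES)
        req.aql = "FOR c IN candidates FILTER c.clearance == @level RETURN c.name"
        req.bind_vars = {"level": level}
    return req


def validate(req: Request) -> bool:
    """Validator (stub): accept only read-only AQL whose @params are all bound."""
    if not req.aql or WRITE_KEYWORDS.search(req.aql):
        return False
    return set(re.findall(r"@(\w+)", req.aql)) == set(req.bind_vars)


def format_response(names: list[str]) -> str:
    """Response formatter (stub): turn query rows into a chat reply."""
    if not names:
        return "No matching candidates found."
    return f"Found {len(names)} candidate(s): " + ", ".join(names)


def answer(text: str, execute: Callable[[str, dict], list]) -> str:
    """Run router -> query builder -> validator -> database -> formatter."""
    req = build_query(route(Request(text)))
    if not validate(req):
        return "Sorry, I couldn't turn that into a safe search."
    return format_response(execute(req.aql, req.bind_vars))


if __name__ == "__main__":
    # In-memory stand-in for ArangoDB; these records are made up
    rows = [{"name": "A. Smith", "clearance": "nv1"},
            {"name": "B. Jones", "clearance": "baseline"}]

    def fake_execute(aql: str, bind_vars: dict) -> list:
        # Ignores the AQL text and applies the same filter in Python
        return [r["name"] for r in rows if r["clearance"] == bind_vars["level"]]

    print(answer("Who holds an NV1 clearance?", fake_execute))  # Found 1 candidate(s): A. Smith
```

The `execute` callback keeps the sketch database-free. In a real deployment it could wrap python-arango's `db.aql.execute(aql, bind_vars=bind_vars)`. The main design point is passing values as AQL bind parameters rather than splicing user text into the query string. That way the validator only has to confirm the query is read-only and fully bound.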

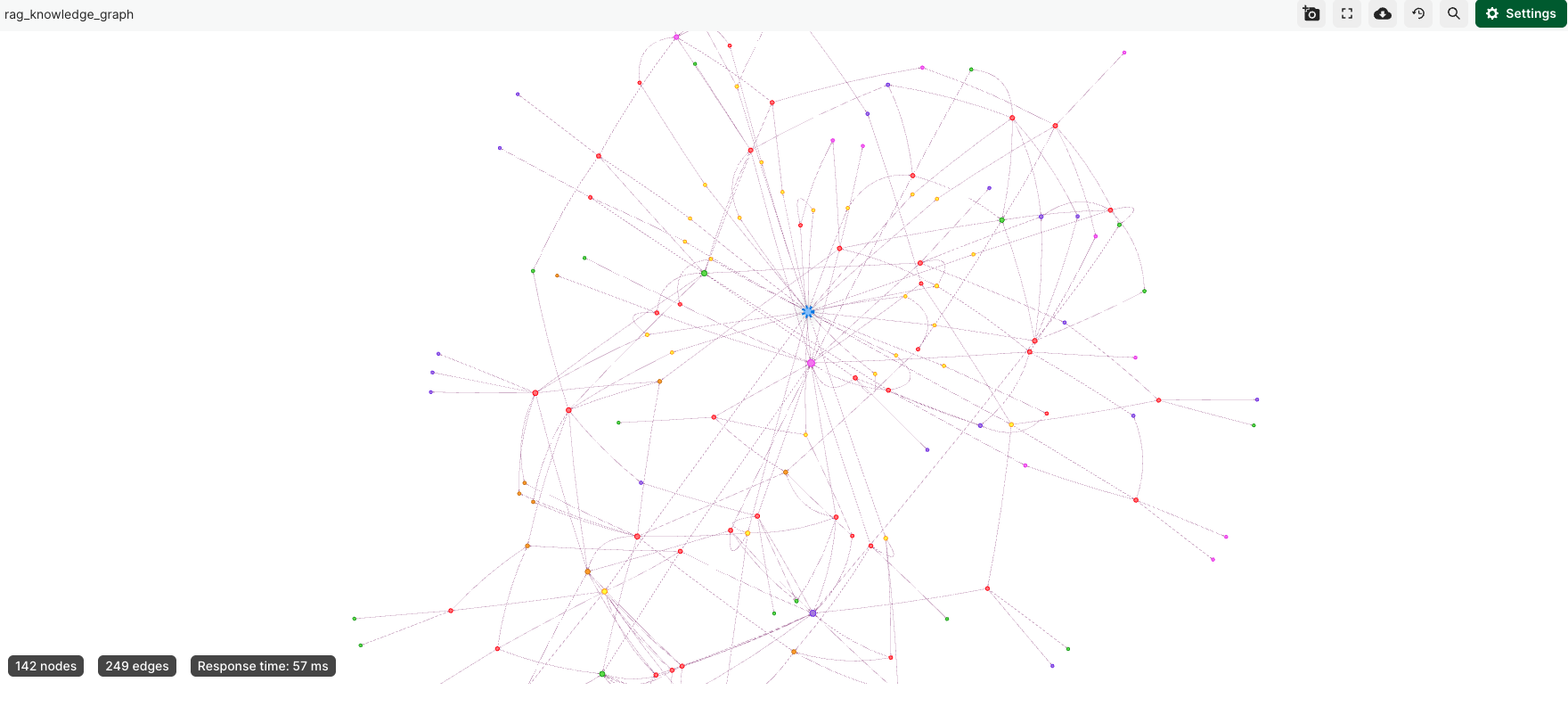

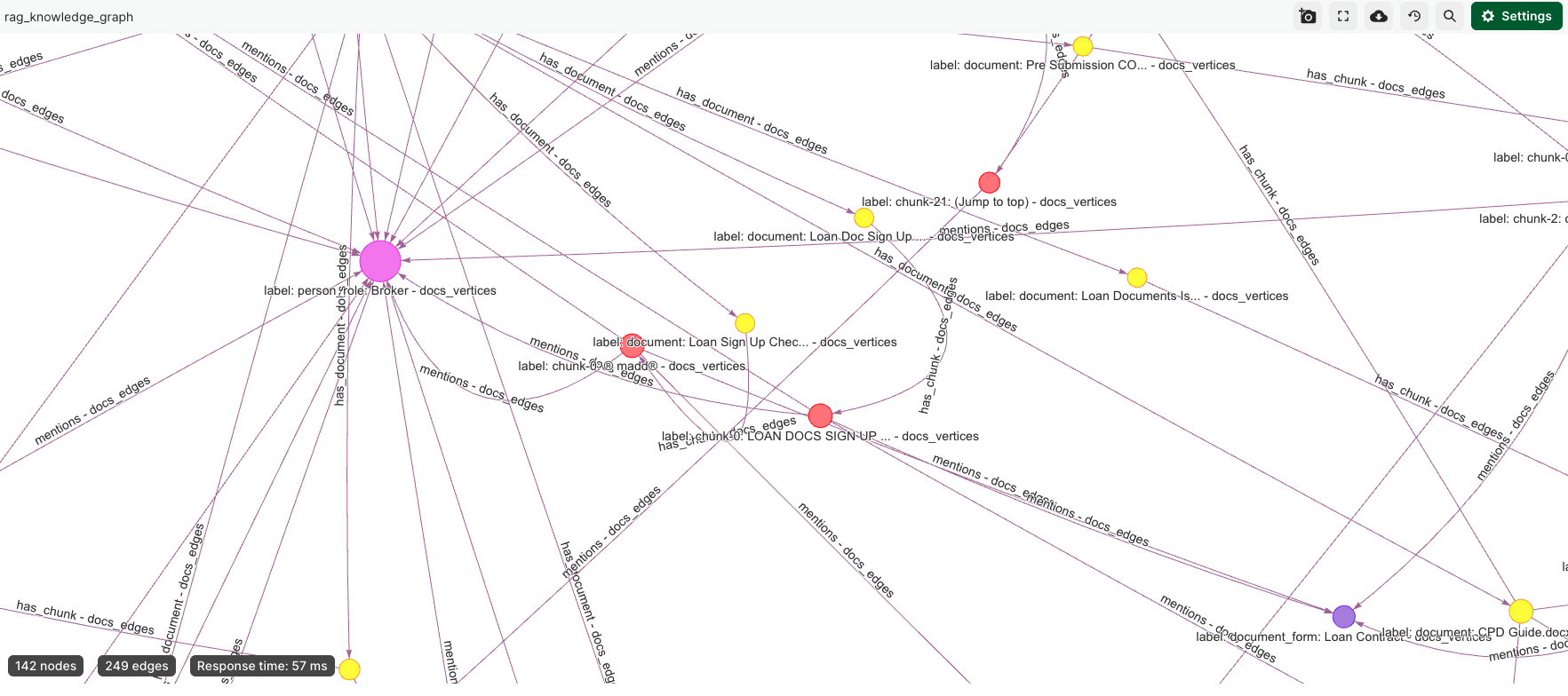

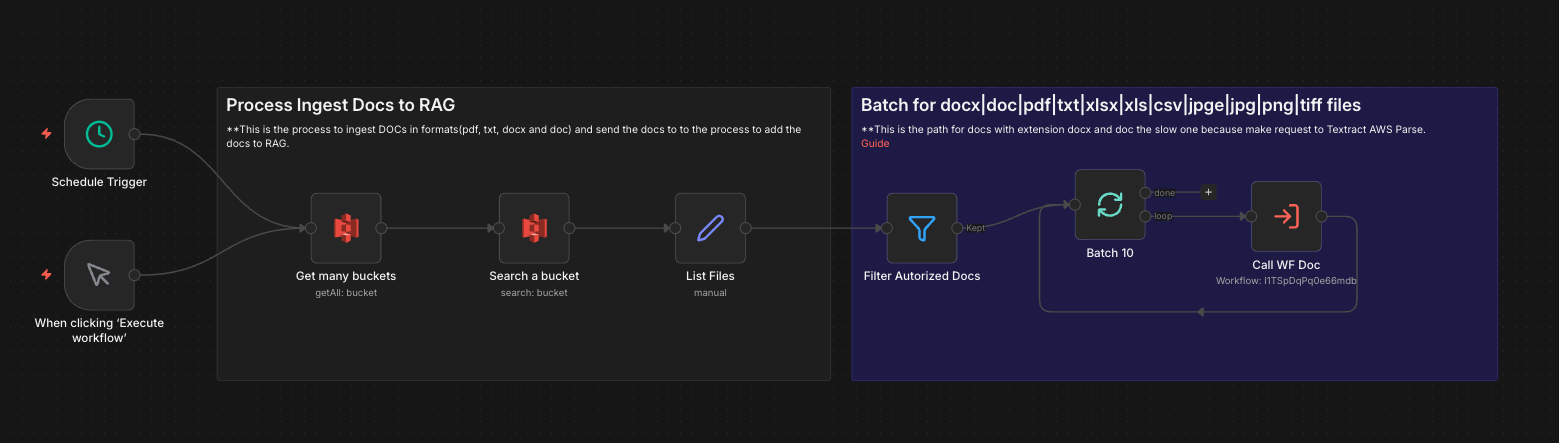

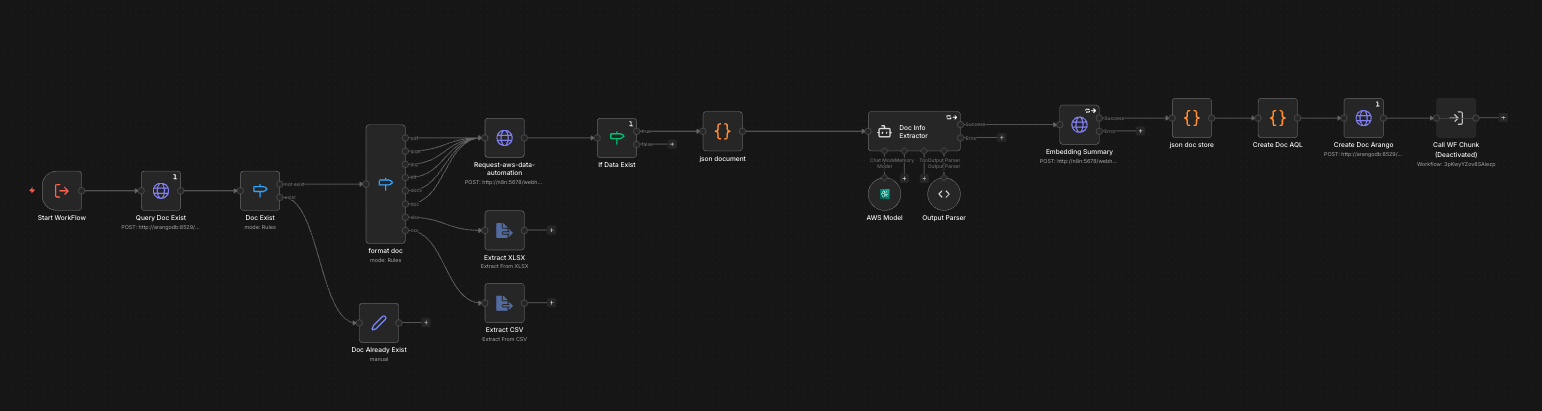

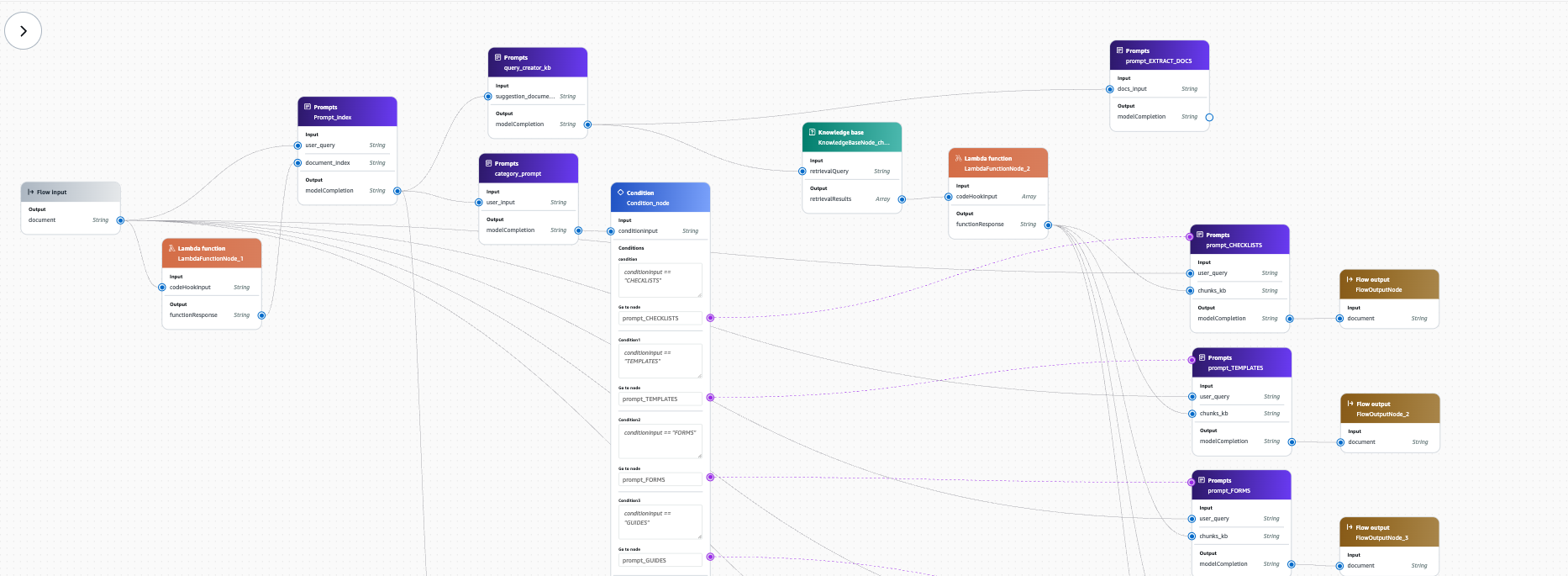

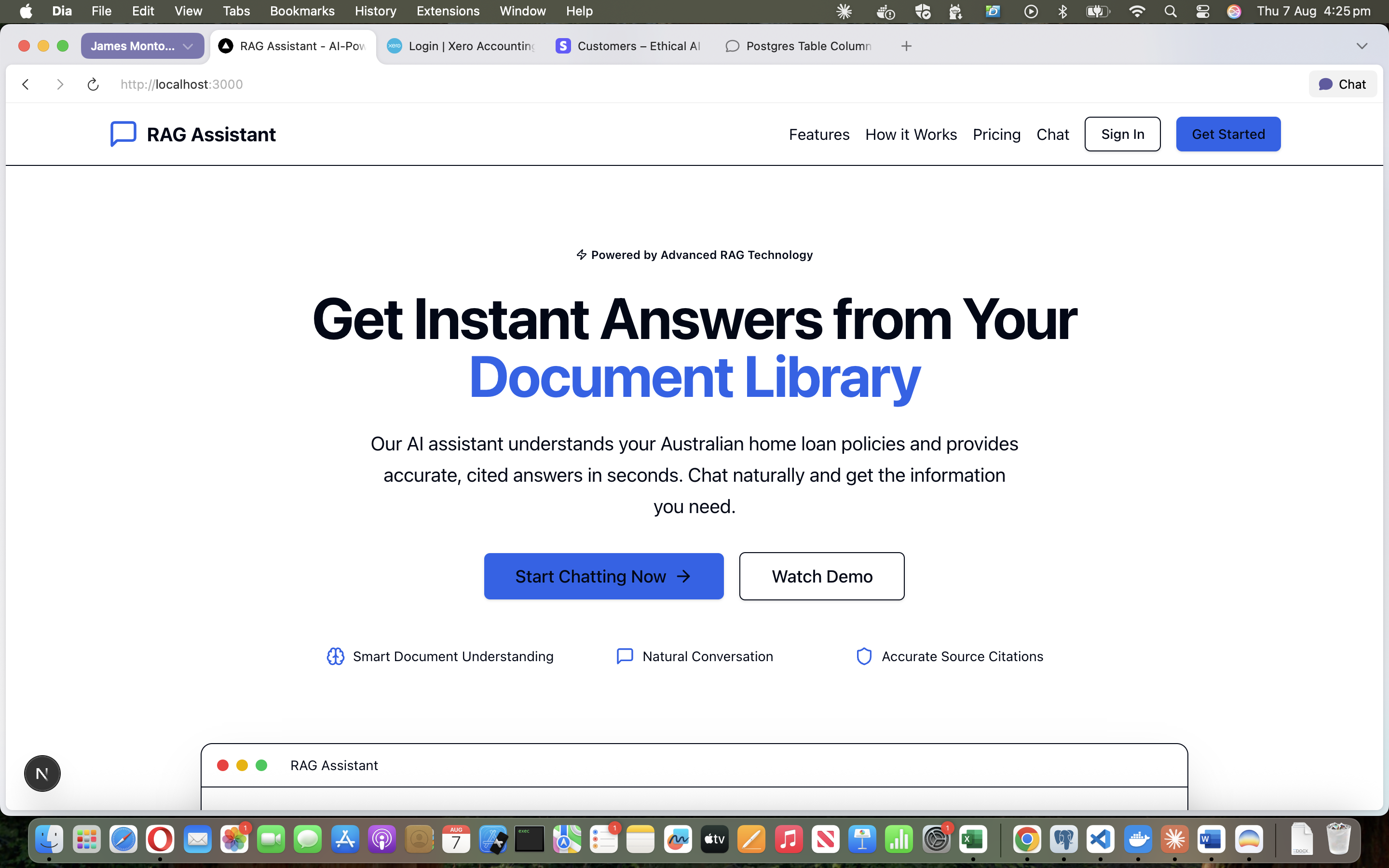

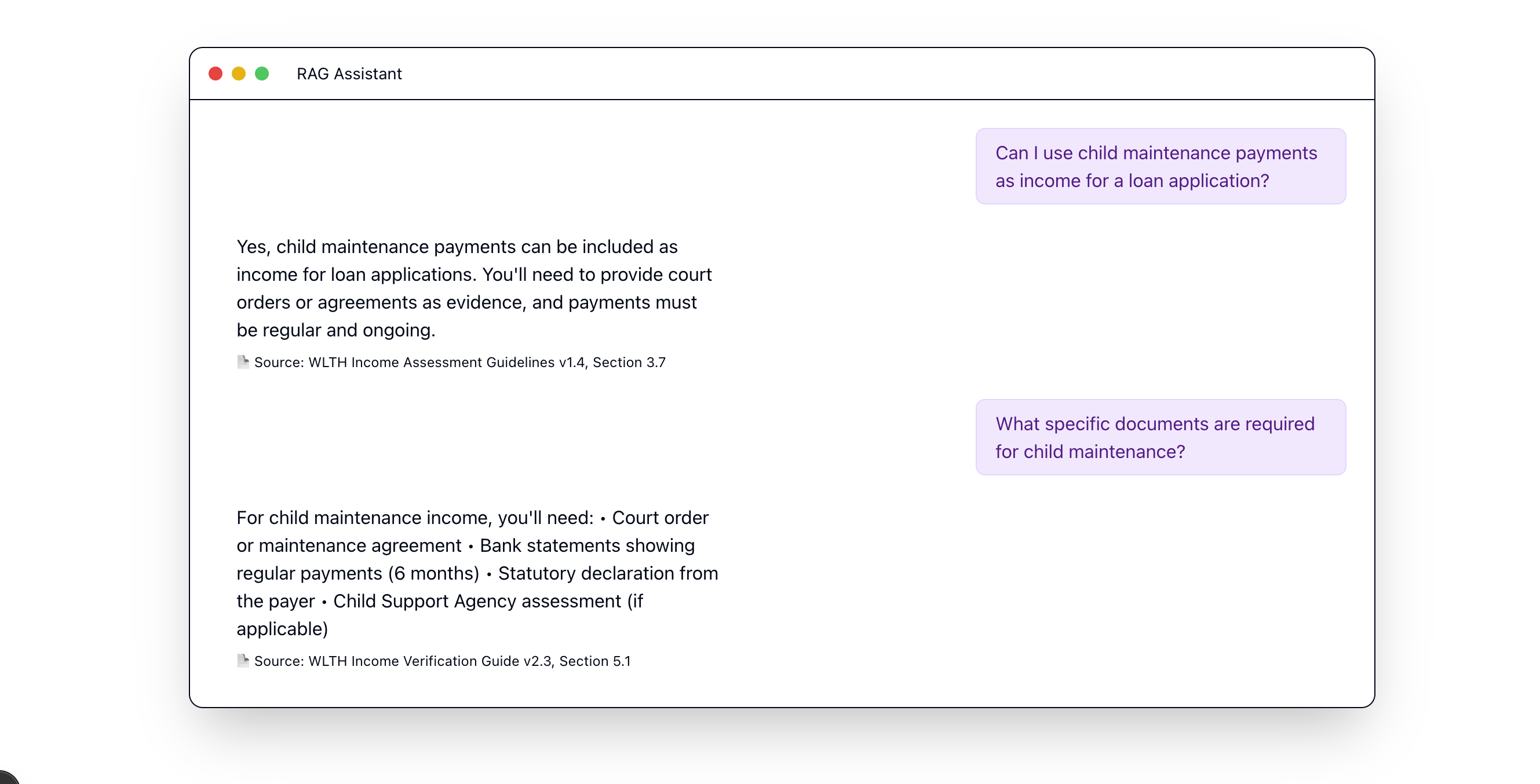

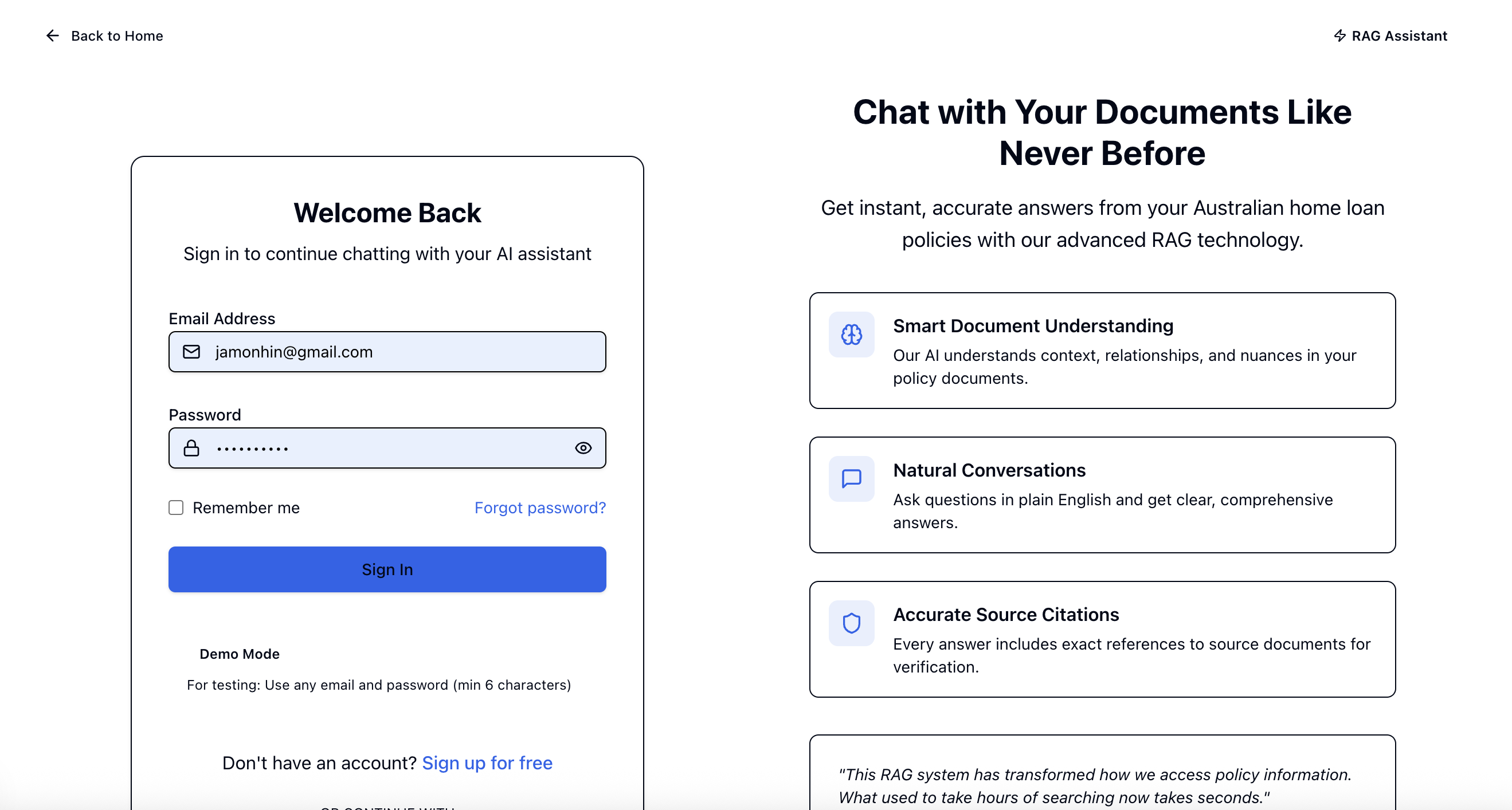

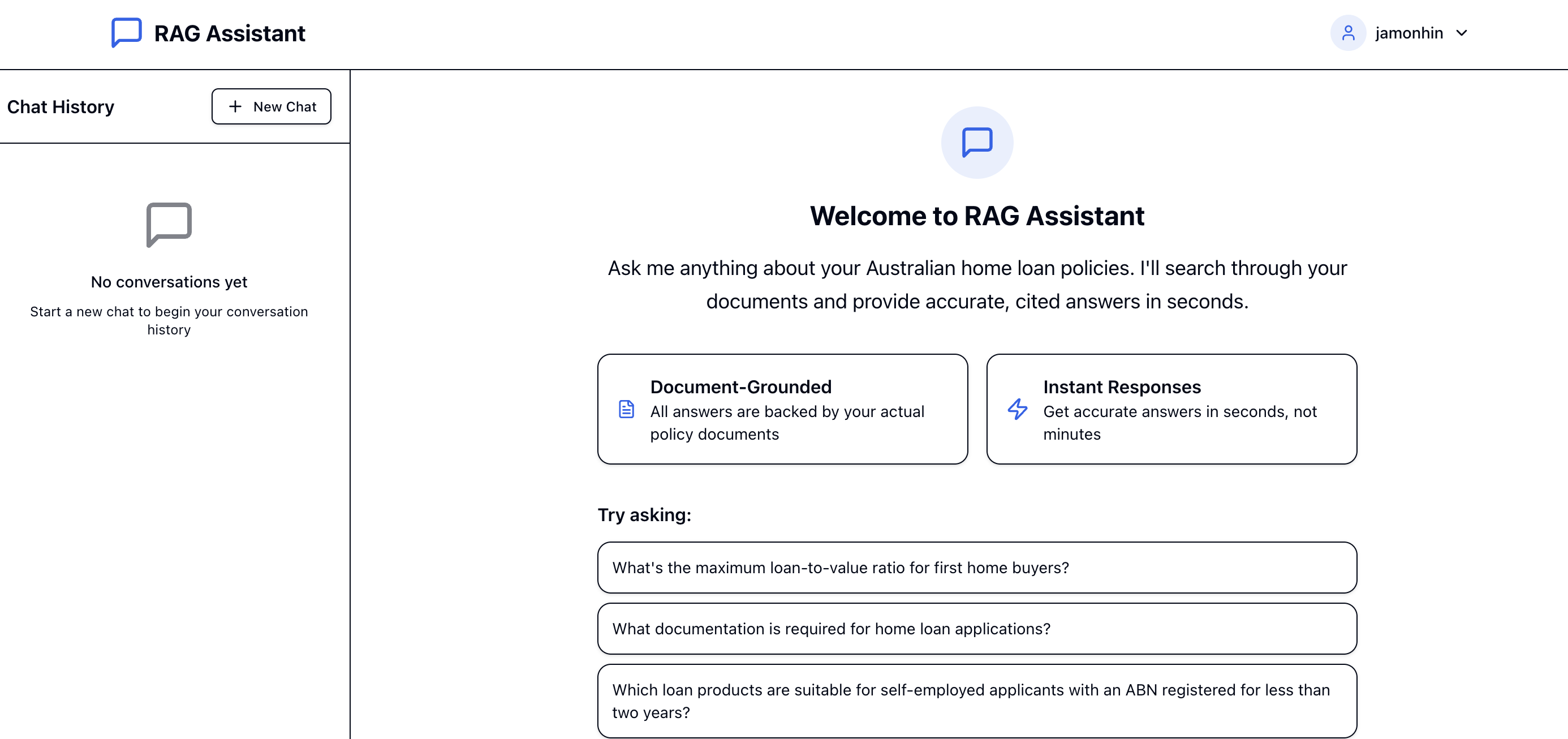

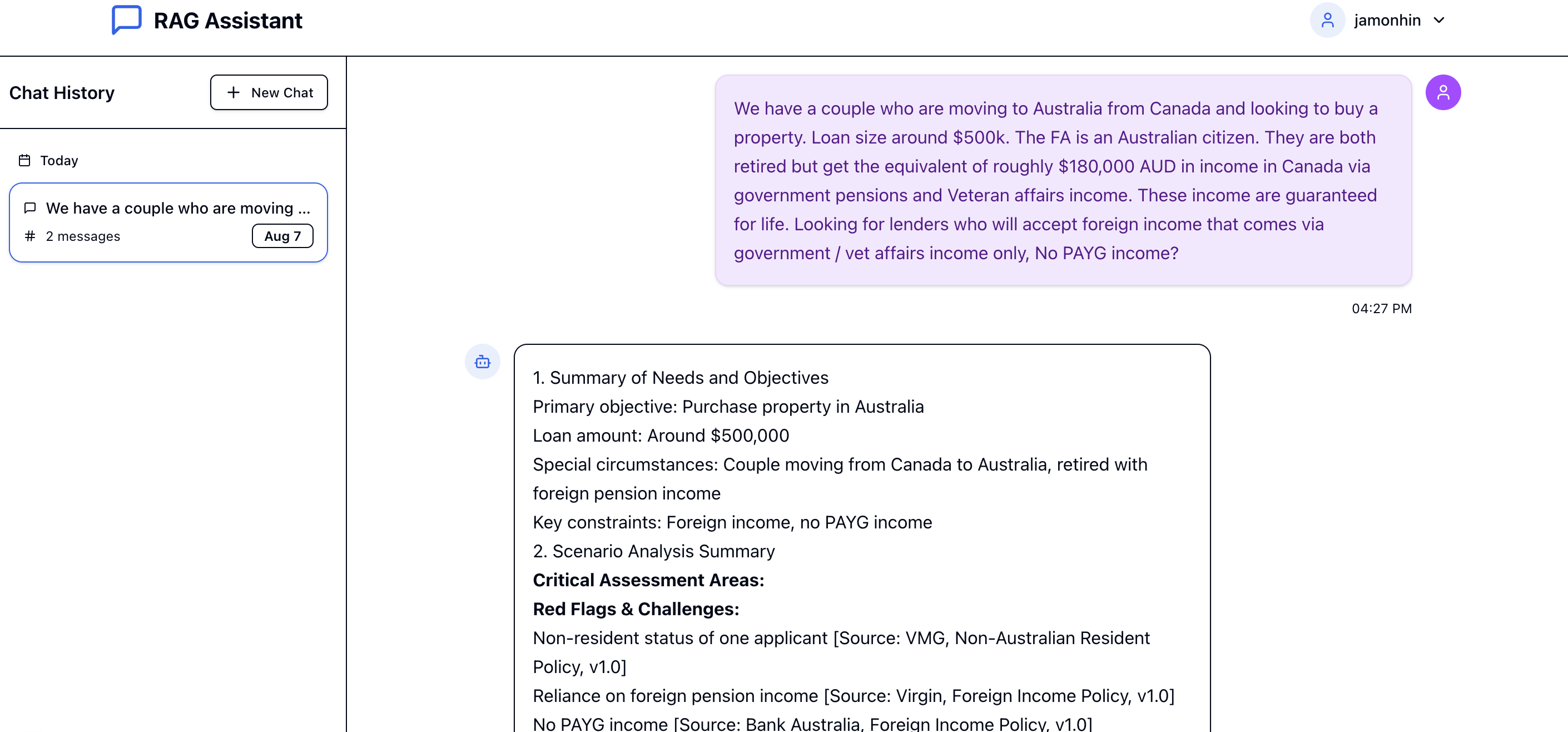

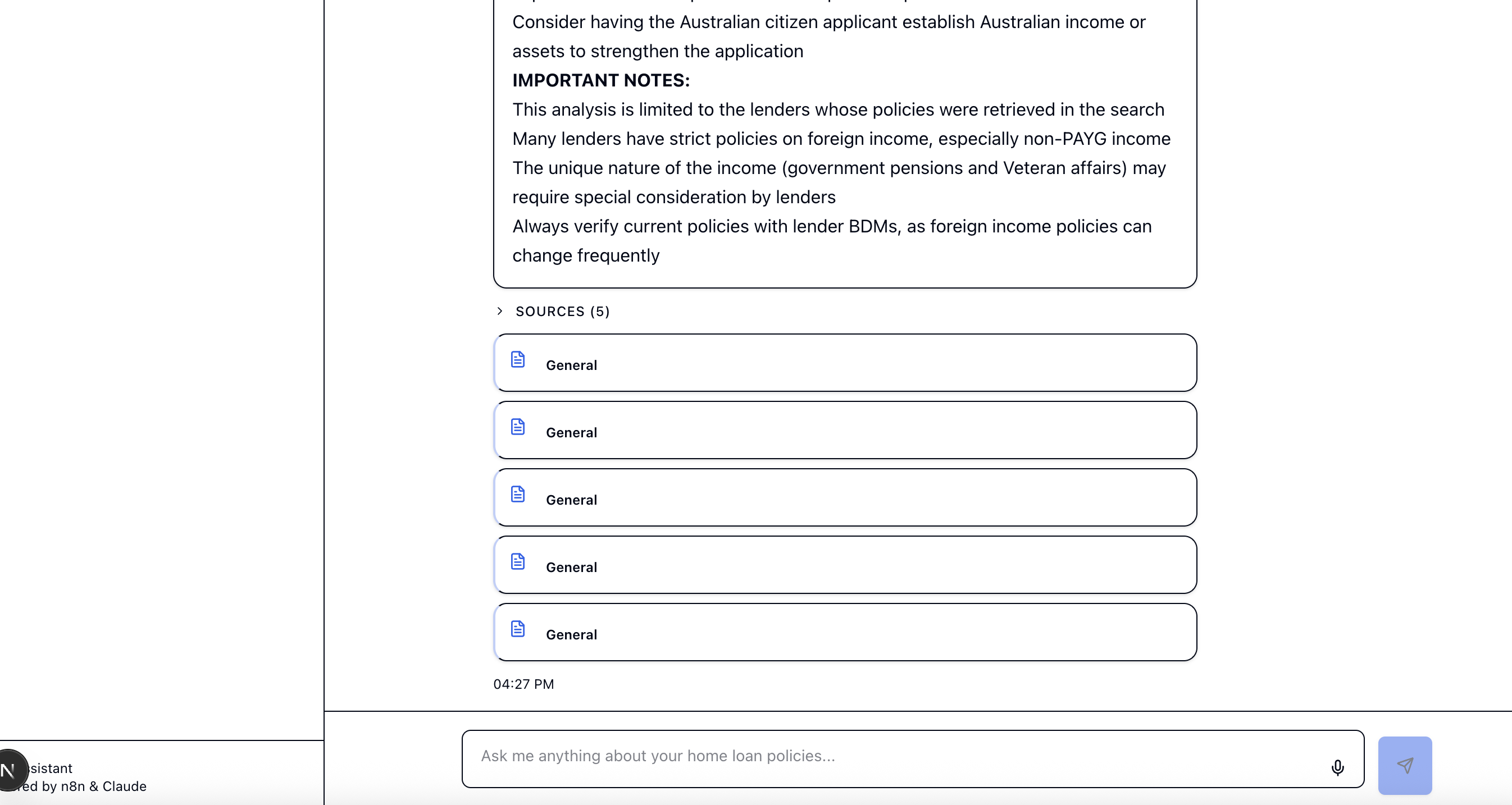

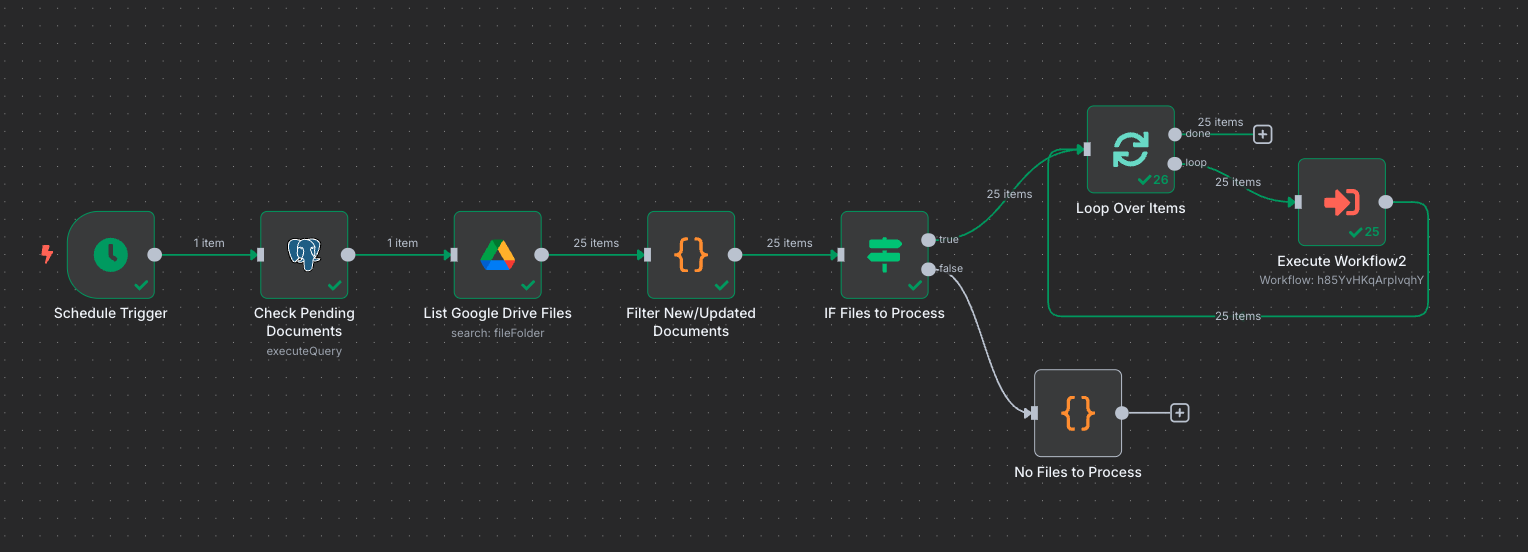

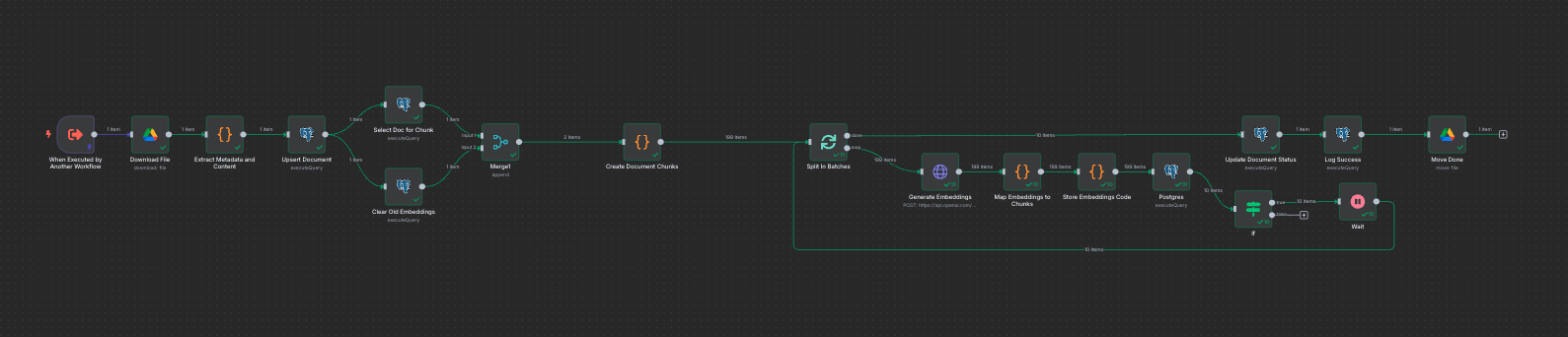

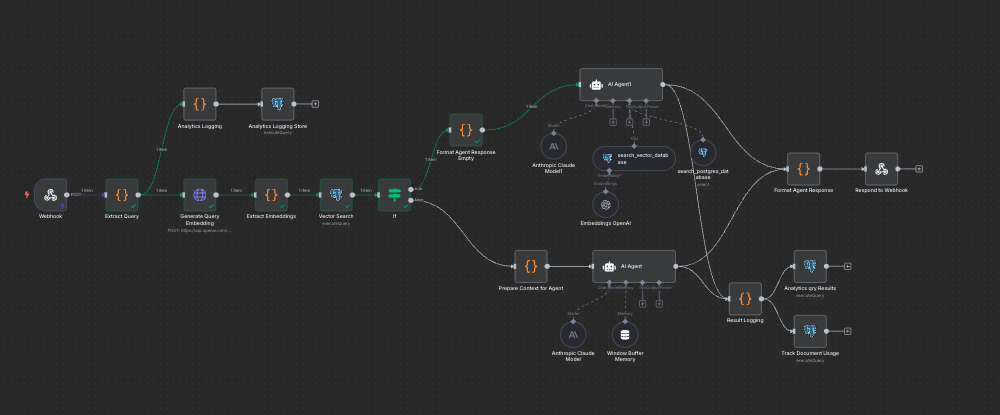

For eThink Solutions, an award-winning Australian custom application and BI company whose clients include Lyft, GrainCorp, and DP World, I'm building a RAG system that adds document intelligence to their mortgage broker CRM. The platform processes 150+ loan documents with 95%+ accuracy, classifies and chunks their content at a granular level, and uses a graph model to map the relationships between banks, processes, and compliance requirements. Staff can then query all of it through natural conversation, and manual document lookup time has already dropped by 80%.

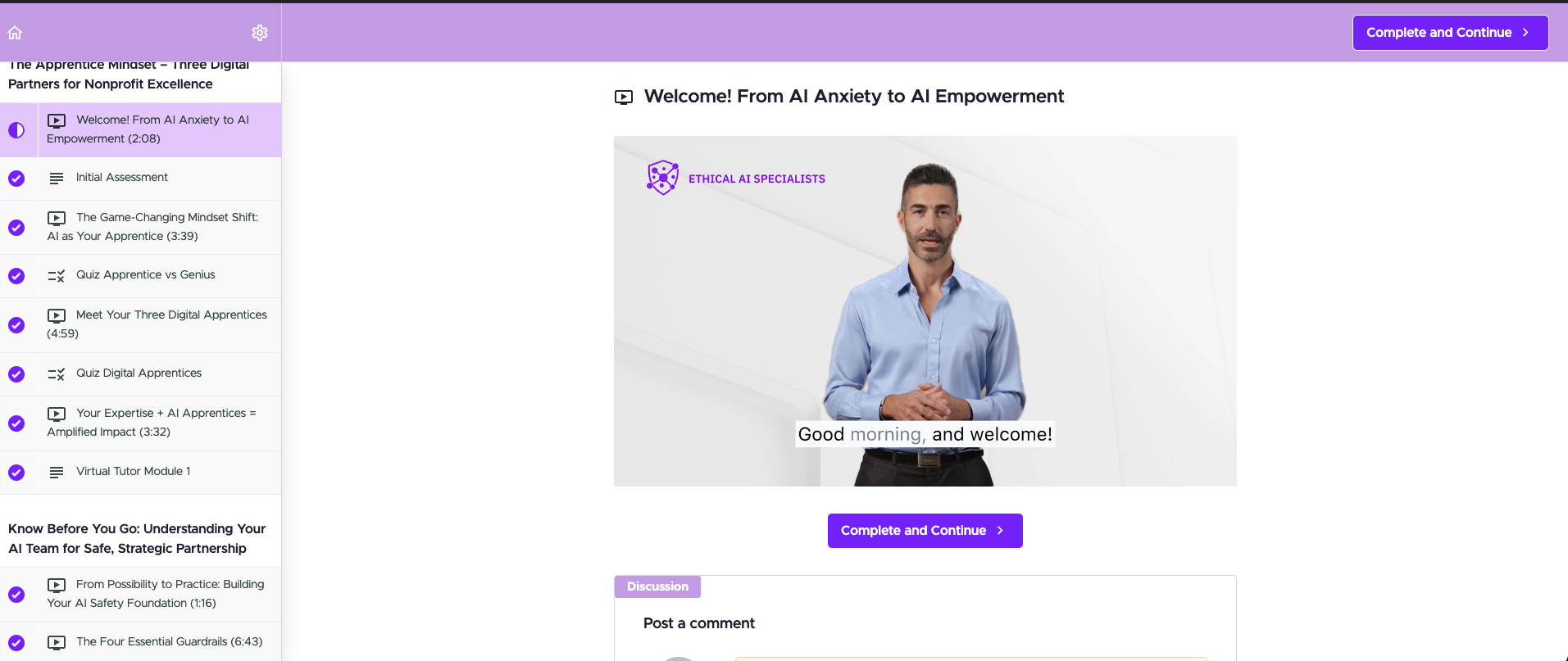

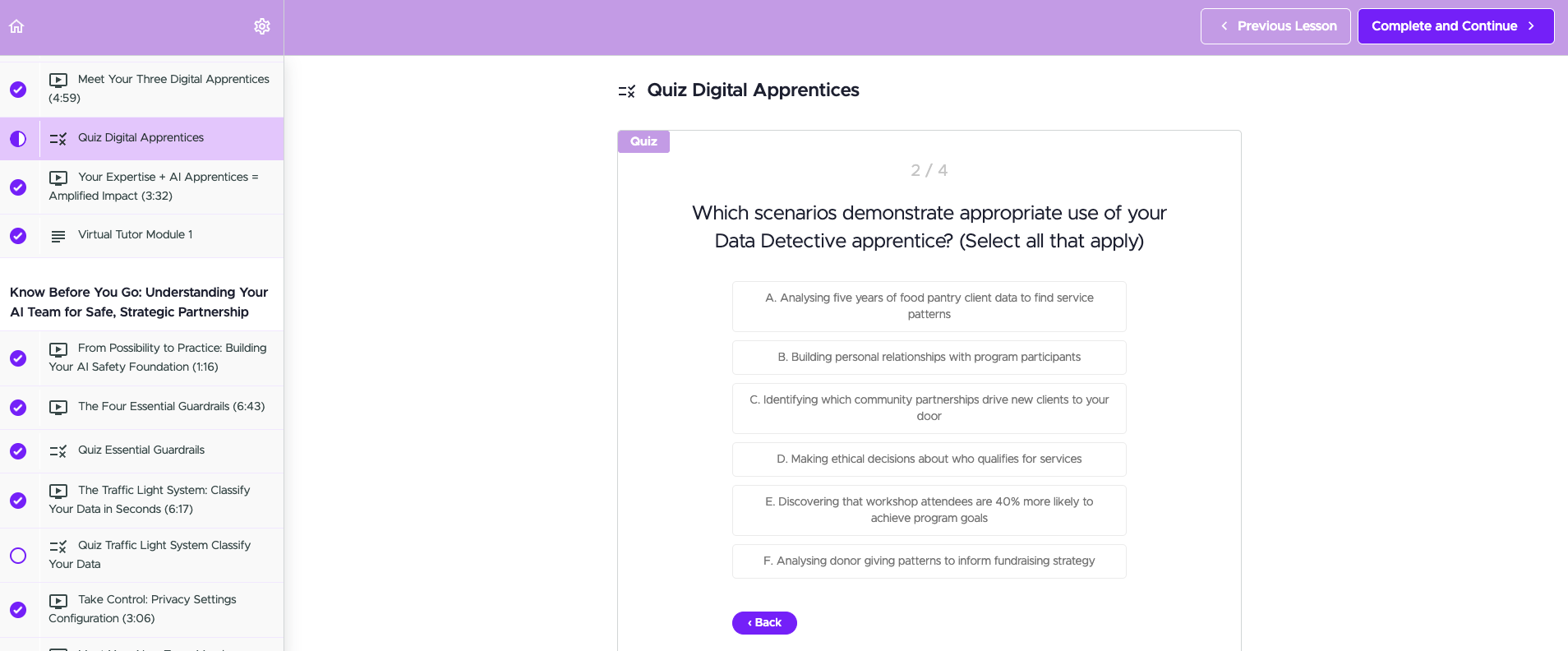

For not-for-profit organisations, I develop and deliver AI training programs that make AI accessible to non-technical teams, covering safe adoption frameworks, data governance, and practical tool implementation.

Core Technical Stack

- LLM Integration & AI: Claude (Anthropic API), OpenAI, Gemini, Grok, Ollama, Amazon Bedrock models, prompt engineering, multi-agent orchestration, RAG systems

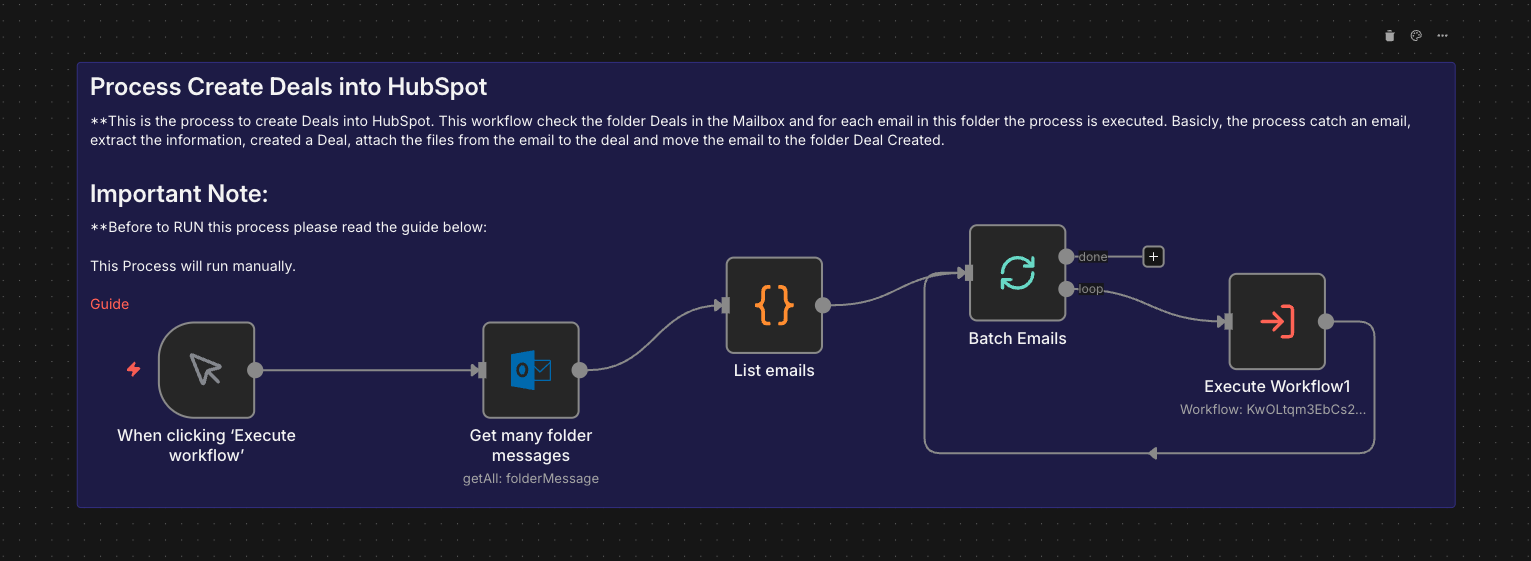

- Workflow Automation: n8n (self-hosted, production-grade), end-to-end pipeline design and deployment

- Data & Databases: ArangoDB (multi-model: document, graph, vector search), PostgreSQL, advanced SQL, ETL/data pipeline architecture

- Cloud & Infrastructure: AWS (EC2, S3, Bedrock), Docker, Traefik, serverless architectures

- Integrations: HubSpot, Salesforce, Azure, Google APIs, and any other system that exposes an API

- Frameworks & Languages: CrewAI, LangChain, Python, TypeScript

Background

My foundation is over twelve years of enterprise data engineering across financial services and telecommunications — building ETL pipelines, designing data models, leading database migrations, and developing real-time KPI dashboards at scale. That experience gives me something many AI engineers lack: a deep understanding that even the most sophisticated models are only as good as the data architecture underneath them.

I see AI agents not as black boxes but as data systems requiring careful orchestration — ingestion, transformation, storage, retrieval, and presentation. My ability to bridge traditional data infrastructure with cutting-edge AI implementation means I deliver production-ready systems, not proof-of-concept demos.

Let's Build Something Remarkable

I'm focused on helping forward-thinking teams deploy intelligent systems that deliver measurable business outcomes. Whether it's automating complex workflows, building conversational AI interfaces, or designing the data architecture that makes it all work, I bring the engineering depth to take projects from concept to production.

Get in touch: james@ethicalaispecialists.com.au | ethicalaispecialists.com.au